The Python client is generated using Airflow's OpenAPI spec. See the README for full client API documentation.

For the basic authentication used in the client example, make sure that Airflow is configured with `basic_auth` as the `auth_backend` and that the client has been generated with securitySchema Basic. The example configures HTTP basic authorization, enters a context with an instance of the API client, creates an instance of the API class from `config_api`, and gets the current configuration with `get_config`. If the call fails, it prints `"Exception when calling ConfigApi->get_config: %s\n" % e`.

Similarly, it is also possible to use the REST API for the same result (e.g., in case you have no access to the CLI but your Airflow instance can be reached).

Airflow Plugin - API: this plugin exposes REST-like endpoints to perform operations and access Airflow data. Airflow itself doesn't abstract any logic into reusable components, so this API replicates application logic. Because of how this is done, the API may behave differently from the UI.

Cloud Composer API: manages Apache Airflow environments on Google Cloud Platform. The Google APIs Explorer is a tool that helps you explore various ...

I would like to connect my Airflow application with my Apache NiFi application through the Apache NiFi API. The aim is to let Airflow orchestrate my ...
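The basic-auth request for the current configuration can also be sketched without the generated client, using only the Python standard library. This is a minimal sketch, not the client's own implementation: the base URL `http://localhost:8080/api/v1`, the `admin`/`admin` credentials, and the helper name `build_config_request` are assumptions made for illustration. The request is only built here, not sent.

```python
import base64
import urllib.request


def build_config_request(base_url: str, username: str, password: str) -> urllib.request.Request:
    """Build a GET request for the REST API's config endpoint using HTTP basic auth.

    `build_config_request` is a hypothetical helper, not part of any Airflow package.
    """
    # HTTP basic auth is "username:password", base64-encoded, in the Authorization header.
    token = base64.b64encode(f"{username}:{password}".encode("utf-8")).decode("ascii")
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/config",
        headers={"Authorization": f"Basic {token}", "Accept": "application/json"},
        method="GET",
    )


# Placeholder host and credentials (assumptions); replace with your own instance.
request = build_config_request("http://localhost:8080/api/v1", "admin", "admin")
print(request.get_method(), request.full_url)

# To actually send the request against a reachable Airflow instance:
#     with urllib.request.urlopen(request) as response:
#         print(response.read().decode("utf-8"))
```

The call only succeeds if the API accepts basic authentication, which is why the backend setting matters.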

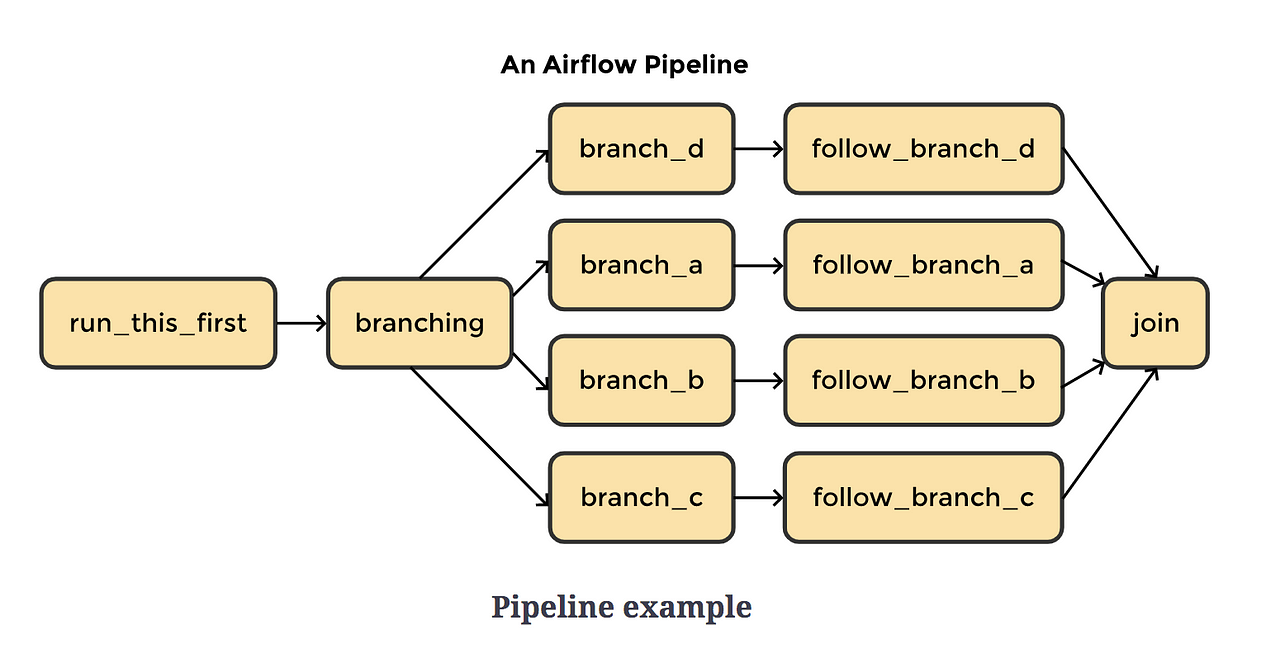

The client example starts from two imports: `import airflow_client.client` and `from pprint import pprint`.

Step 1 - Enable the REST API

By default, Airflow does not accept requests made to the API. However, it is easy enough to turn on: in the configuration, comment out the original `auth_backend` line that uses the `deny_all` backend and add one that uses the `basic_auth` backend, which enables the basic auth scheme.

Airflow Taskflow is a new way of writing DAGs with ease. In this tutorial, you will learn what the Taskflow API is, why it ... The Taskflow API has three main aspects: XCom Args, decorators, and XCom backends. As you will see, you need to write fewer lines than before to obtain the same DAG. That helps to make DAGs easier to build, read, and maintain.

Using the Rivery API to schedule data transformations in Apache Airflow

The Rivery API allows you to integrate the functionality of Rivery's platform into other applications or schedulers, written in other programming languages. You can read more about how to set up authentication credentials to enable the Rivery API here.

Apache Airflow is a very popular open-source, Python-based platform used to author, monitor, and schedule workflows. Airflow's scalability, extensibility, and integration with DevOps tools such as Docker have made it the go-to platform for data engineers to build data ingestion and transformation workflows. We believe that Airflow's customizability, dynamic nature, and scheduling options, combined with Rivery's intuitive UI for building ELT pipelines in the cloud, make for an exciting combination that would allow, for the first time, both technical and non-technical teams in an organization to build data workflows.

In this tutorial, geared toward advanced users coming from a data engineering background, we walk through how to enable and execute Rivers using the Apache Airflow platform to better integrate Rivery with existing enterprise data engineering architecture. While Rivery can stand on its own as a fully fledged data integration, management, and orchestration tool, part of Rivery's value comes from its adaptability to existing organizational data architecture. This tutorial assumes a basic familiarity with Apache Airflow and Docker, including creating a containerized environment using a .yml docker file and configuring a local webserver to run Apache Airflow.

First, we need to create Rivery API credentials. In order to do this, log into your Rivery account and select the button from the left hand panel. Select the button, give it a name of your choice, and hit "Create".

IMPORTANT: After you name your token you will be shown a screen with your unique token identifier. This will be the only time you will be shown this info, so copy/paste or write it down and keep it in a safe place.

Apache Airflow is an extensible orchestration tool that offers multiple ways to define and orchestrate data workflows. The first thing we need to do is to add the Rivery API as a connection in Airflow. Navigate to your terminal and launch your Apache web server with the command "docker-compose up", then navigate to [port number]/ from your browser. The easiest way to enable this is in the Airflow UI.

Airflow configuration

The Airflow DAG will consist of four tasks: the two in the middle interact with Apache NiFi's API. The first and the last are stand-ins for other operations we might want to schedule or execute from Airflow to prepare or finalize tasks.

Airflow task dependencies. Image created by author.
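The ordering of a four-task DAG like the one described (a preparation stand-in, two tasks that talk to Apache NiFi's API, and a finalizing stand-in) can be illustrated in plain Python. This is only a sketch of the dependency chain using the standard library's `graphlib`; it does not import Airflow, and the task names are hypothetical since the source does not name them. In an Airflow DAG file, a linear chain like this is typically declared with the `>>` operator between tasks.

```python
from graphlib import TopologicalSorter

# Hypothetical task names. Each key maps to the set of tasks it depends on.
dependencies = {
    "prepare": set(),                        # stand-in for preparation work
    "nifi_first_call": {"prepare"},          # first task interacting with NiFi's API
    "nifi_second_call": {"nifi_first_call"}, # second task interacting with NiFi's API
    "finalize": {"nifi_second_call"},        # stand-in for finalization work
}


def execution_order(deps: dict[str, set[str]]) -> list[str]:
    """Return the tasks in an order that respects every dependency."""
    return list(TopologicalSorter(deps).static_order())


order = execution_order(dependencies)
print(order)
```

Because each task depends only on the one before it, there is exactly one valid order, which is what a scheduler would follow for a linear chain.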
